The 2026 Shift: Why NVIDIA Rubin R100 vs Blackwell B200 Matters

If you feel like you just finished reading about the Blackwell B200, you’re not alone. But in the world of 2026 accelerated computing, a year is a lifetime. The NVIDIA Rubin R100 vs Blackwell B200 debate isn’t just for hardware geeks; it defines how fast the next version of Gemini or Sora will run.

What is the NVIDIA Rubin architecture?

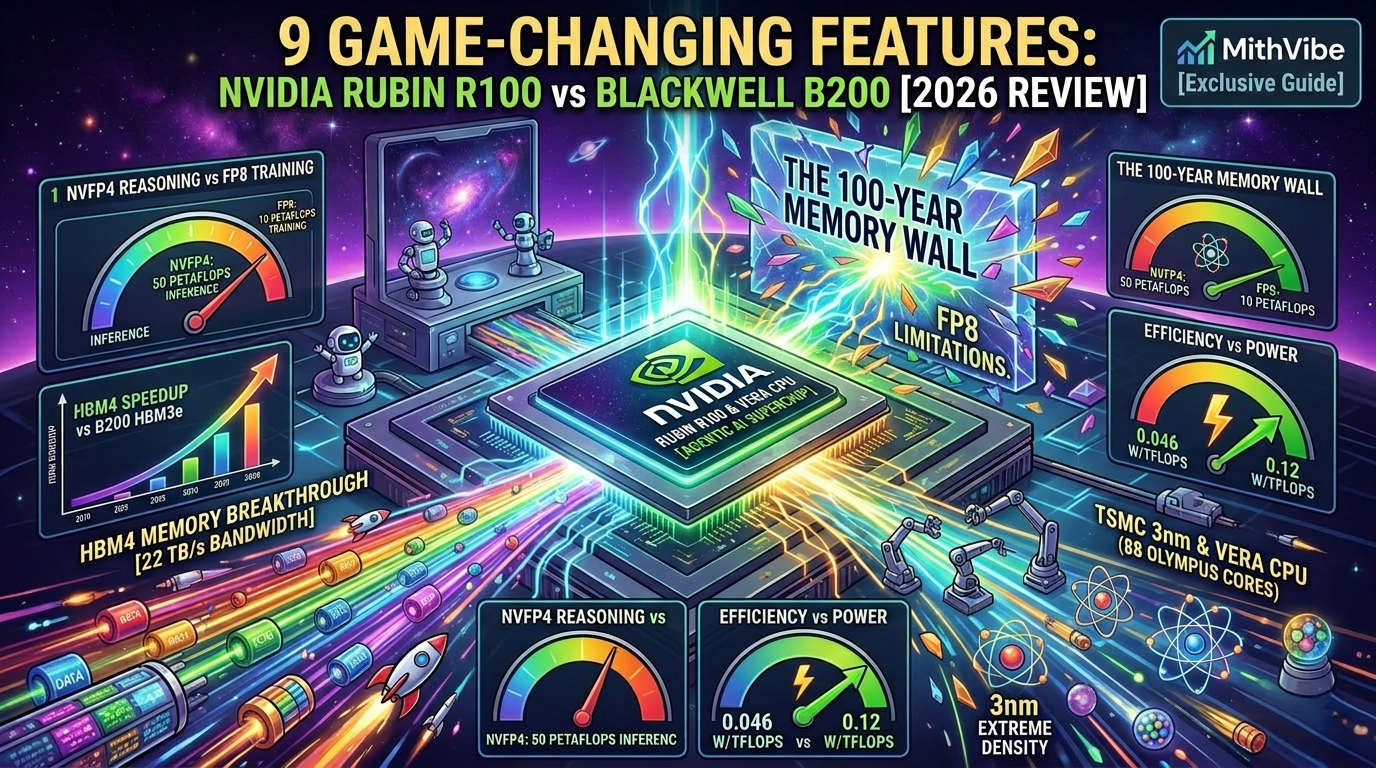

The NVIDIA Rubin architecture is the 2026 next-generation AI computing platform that succeeds the Blackwell series. Built on TSMC’s enhanced 3nm process, the Rubin R100 GPU features 288GB of HBM4 memory and delivers up to 50 Petaflops of NVFP4 compute—a 5x leap in inference throughput over Blackwell.

🤖 Summary: 60-Second Read

- Blackwell is so 2025: The new Rubin R100 isn’t just a refresh; it’s a 3nm architectural reset that makes current chips look like pocket calculators.

- Bandwidth Overdose: We’ve jumped from 8 TB/s to a mind-melting 22 TB/s thanks to HBM4 memory. The “data bottleneck” just got a lot smaller.

- The Brain Swap: NVIDIA ditched Grace for Vera, a custom 88-core CPU built specifically to handle “Agentic AI” (AI that actually does things, not just talks).

The 3nm Revolution: Shrinking the Silicon

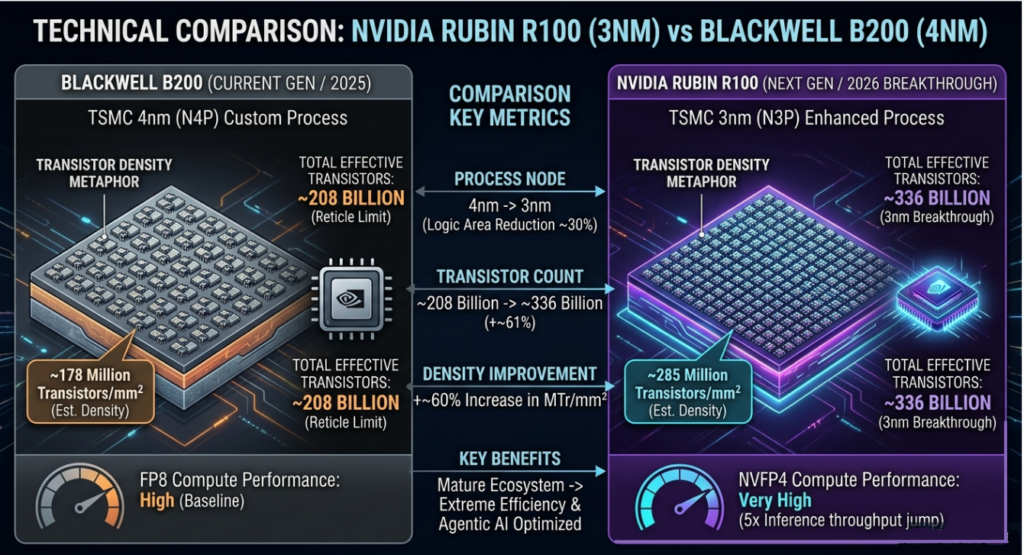

The first major win for the R100 is the jump to the TSMC 3nm (N3P) process. While the Blackwell B200 was a masterpiece on 4nm, it was physically pushing the limits of what a single chip could do.

By shrinking the transistors, NVIDIA has managed to fit 336 billion transistors onto the Rubin die. This isn’t just about “more stuff”; it’s about efficiency. The R100 delivers 3.3x more performance per watt than the B200. For massive data centers, this is the difference between a profitable operation and a massive electricity bill.

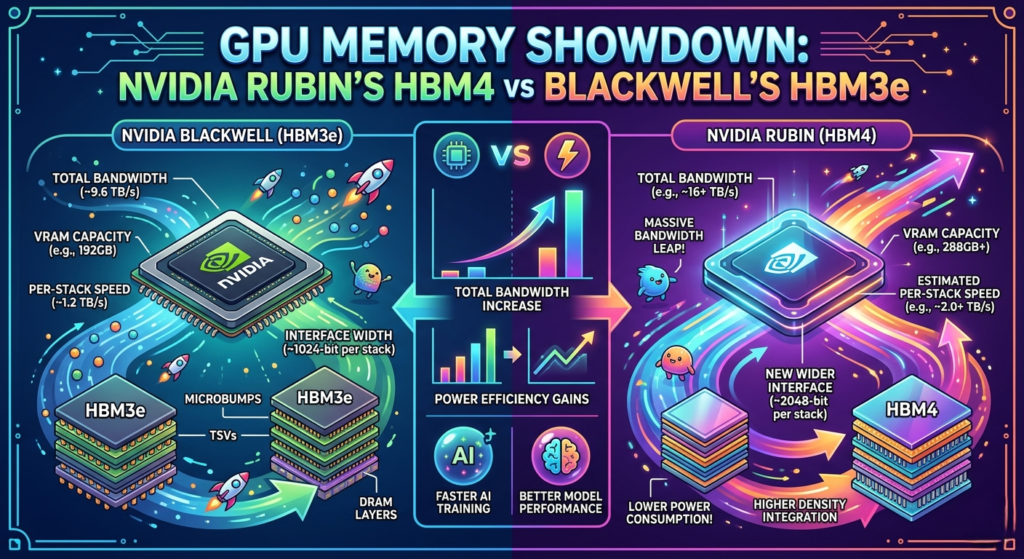

HBM4 Memory: The End of the Data Bottleneck

The biggest “headache” in AI training has always been the Memory Wall. You can have the fastest processor in the world, but if the memory can’t feed it data fast enough, the processor sits idle.

In the NVIDIA Rubin R100 vs Blackwell B200 comparison, memory is the knockout punch:

- Blackwell (B200): Uses HBM3e memory with 8 TB/s bandwidth.

- Rubin (R100): Uses the brand-new HBM4 memory with a staggering 22 TB/s bandwidth.

That is a 2.75x increase. This is why the R100 can handle “trillion-parameter” models far more comfortably, while Blackwell-based systems are starting to sweat.
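To make the bandwidth gap concrete, here is a rough back-of-the-envelope sketch. It assumes a purely memory-bound workload where every weight is read from HBM once per pass, and it uses a hypothetical 70B-parameter model with 1-byte weights. The function names are illustrative, and real throughput also depends on compute, caching, and batching.

```python
# Rough, memory-bound estimate: how long does it take just to stream a model's
# weights from HBM once? Bandwidth figures are the ones quoted in this article.

B200_TB_S = 8.0    # HBM3e bandwidth quoted for Blackwell B200
R100_TB_S = 22.0   # HBM4 bandwidth quoted for Rubin R100


def weight_stream_ms(params: float, bytes_per_param: float, bandwidth_tb_s: float) -> float:
    """Milliseconds to read every weight once (1 TB = 1e12 bytes)."""
    total_bytes = params * bytes_per_param
    return total_bytes / (bandwidth_tb_s * 1e12) * 1e3


def speedup(old_tb_s: float, new_tb_s: float) -> float:
    """Ratio of new bandwidth to old bandwidth."""
    return new_tb_s / old_tb_s


if __name__ == "__main__":
    params = 70e9  # hypothetical model size, chosen only for illustration
    print(f"bandwidth ratio: {speedup(B200_TB_S, R100_TB_S):.2f}x")
    print(f"B200: {weight_stream_ms(params, 1.0, B200_TB_S):.2f} ms per pass")
    print(f"R100: {weight_stream_ms(params, 1.0, R100_TB_S):.2f} ms per pass")
```

Under these assumptions the time per pass shrinks by the same 2.75x as the bandwidth grows, which is why memory bandwidth matters so much for token-by-token generation.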

HBM4 Memory Integration in NVIDIA Rubin GPUs: Breaking the 20 TB/s Barrier

The most significant bottleneck in AI training is no longer raw math speed; it is the “Memory Wall.” If the processor has to wait for data to travel from the RAM, it sits idle, wasting thousands of dollars in electricity every hour.

HBM4 memory integration in NVIDIA Rubin GPUs is the solution the industry has been waiting for. Unlike the HBM3e found in Blackwell, which topped out at 8 TB/s, Rubin’s HBM4 configuration hits an astonishing 22 TB/s of bandwidth.

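This is made possible by a 2048-bit wide memory interface on each HBM4 stack and Micron’s new 12-high stacked DRAM. For the end-user, this means multi-trillion-parameter models (like the rumored GPT-5 or Gemini 3 Ultra) can run on a single rack with far less time lost to memory stalls. A 50-trillion-parameter model is a stretch, though: at 4 bits per weight it needs about 25 TB for the weights alone, while 72 GPUs with 288GB each provide roughly 20.7 TB of HBM4.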

Vera CPU: The 88-Core Olympus Powerhouse

For years, NVIDIA paired its GPUs with the Grace CPU. In 2026, we meet Vera. Its 88 “Olympus” cores should worry competitors. Unlike standard server CPUs, Vera is designed for deterministic latency.

When an AI agent is trying to browse the web or write code in real-time, it needs the CPU to handle “sandboxing”—running thousands of tiny trial-and-error environments. Vera can sustain over 22,500 sandboxes per rack, which is 4x more than the best x86 processors from Intel or AMD. This makes the Vera-Rubin “Superchip” the undisputed king of Agentic AI.

Vera CPU and Rubin GPU Tight Coupling Performance: The Agentic Advantage

For a decade, the GPU was treated as a “co-processor” to a traditional x86 CPU from Intel or AMD. That era is over. To get the tight CPU-GPU coupling that modern reasoning workloads require, NVIDIA has replaced the Grace CPU with Vera.

Vera features 88 custom “Olympus” cores using the Armv9.2-A architecture. But the “secret sauce” is the NVLink-C2C (Chip-to-Chip) interconnect. This allows the CPU and GPU to share a unified memory pool.

- The Result: The CPU can read and write the GPU’s HBM4 memory through a coherent, shared address space, with no explicit copies (though not at anything like L1-cache latency).

- The Impact: In multi-agent simulations—where an AI has to browse the web, write code, and verify it simultaneously—this “tight coupling” results in a 50% performance boost over traditional Grace-Blackwell setups.

NVFP4 Precision: How Rubin Solves Agentic AI Reasoning

In 2024, we talked about FP8 (8-bit floating point). In 2026, the R100 goes all in on NVFP4 (4-bit), a format that first appeared on Blackwell. Using the 3rd Generation Transformer Engine, Rubin applies “adaptive compression.” It essentially “squeezes” the AI model’s data without losing its “intelligence.”

This allows for a 10x reduction in inference token cost. For a company like OpenAI or Anthropic, this means they can serve 10 times more users for the same cost as a Blackwell system.

Quick Specs: NVIDIA Rubin R100 vs Blackwell B200

| Feature | Blackwell B200 (2025) | Rubin R100 (2026) |
| --- | --- | --- |
| Node | 4nm (TSMC 4NP) | 3nm (TSMC N3P) |
| Memory | 192GB HBM3e | 288GB HBM4 |
| Bandwidth | 8 TB/s | 22 TB/s |
| CPU Pairing | Grace (72-core) | Vera (88-core) |
| Inference | 10 Petaflops (FP4) | 50 Petaflops (NVFP4) |

Vera Rubin NVL72: The $3 Million AI Rack

You don’t just buy an R100 chip; you buy a Vera Rubin NVL72 rack. This is a 2,300kg monster that connects 72 GPUs and 36 Vera CPUs using NVLink 6.

The interconnect speed is 3.6 TB/s per GPU. To put that in perspective, the combined NVLink bandwidth inside a single rack is more than the traffic of the entire global internet. If you’re following our NVIDIA share price forecast 2026, these racks are the reason the “demand moat” is so deep.
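The rack-level totals follow from simple multiplication. The sketch below only uses figures quoted in this article (72 GPUs, 3.6 TB/s of NVLink per GPU, 288GB of HBM4 per GPU); the function names are made up for illustration, and it ignores CPU-attached memory and any protocol overhead.

```python
# Rack-level totals for a 72-GPU rack, using the per-GPU figures from this article.

GPUS_PER_RACK = 72
NVLINK_TB_S_PER_GPU = 3.6   # NVLink bandwidth per GPU
HBM4_GB_PER_GPU = 288       # HBM4 capacity per GPU


def rack_nvlink_tb_s(gpus: int = GPUS_PER_RACK,
                     per_gpu_tb_s: float = NVLINK_TB_S_PER_GPU) -> float:
    """Aggregate NVLink bandwidth across all GPUs in the rack, in TB/s."""
    return gpus * per_gpu_tb_s


def rack_hbm_tb(gpus: int = GPUS_PER_RACK,
                per_gpu_gb: float = HBM4_GB_PER_GPU) -> float:
    """Total HBM4 capacity across the rack, in TB (1 TB = 1000 GB)."""
    return gpus * per_gpu_gb / 1000.0


if __name__ == "__main__":
    print(f"aggregate NVLink: {rack_nvlink_tb_s():.1f} TB/s")
    print(f"total HBM4:       {rack_hbm_tb():.3f} TB")
```

That works out to roughly 260 TB/s of aggregate NVLink bandwidth and about 20.7 TB of HBM4 per rack.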

Power and Cooling: The Liquid-Cooled Reality

You cannot run a Rubin system with a fan. It is physically impossible. A single R100 GPU pulls 2,300 Watts. The entire NVL72 rack requires Direct-to-Chip (DTC) liquid cooling. For data centers in India, this is a major hurdle. While we discussed the best robot vacuum cleaner in India, the hardware cooling these AI factories is far more complex.
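A quick estimate shows why air cooling is off the table. This sketch counts only the GPUs, using the 2,300 W and 72-GPU figures from this article; CPUs, switches, and power-conversion losses are ignored, so the real rack draw would be higher. The helper names are hypothetical.

```python
# GPU-only heat load for one rack, using the figures quoted in this article.

GPU_WATTS = 2300      # per-GPU draw
GPUS_PER_RACK = 72    # GPUs in one NVL72 rack


def rack_gpu_kw(gpu_watts: float = GPU_WATTS, gpus: int = GPUS_PER_RACK) -> float:
    """Combined GPU power draw for the rack, in kilowatts."""
    return gpu_watts * gpus / 1000.0


def daily_energy_kwh(kw: float, hours: float = 24.0) -> float:
    """Energy used when running at a constant load for the given hours."""
    return kw * hours


if __name__ == "__main__":
    kw = rack_gpu_kw()
    print(f"GPU load per rack: {kw:.1f} kW")
    print(f"Energy per day:    {daily_energy_kwh(kw):.1f} kWh")
```

Around 165 kW of heat from the GPUs alone has to leave a single rack, which is the practical argument for liquid cooling.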

NVFP4 Precision vs FP8 Training: The Silicon Efficiency War

In 2025, FP8 (8-bit floating point) was the gold standard. It allowed us to train models roughly twice as fast as with the old BF16 standard. But as we enter the “Rubin Era” of 2026, the NVFP4 precision vs FP8 training debate has taken center stage.

Why move to 4-bit? Every time you halve the precision (from 8-bit to 4-bit), you don’t just “save space.” You roughly double the amount of data the chip can move and process in a single clock cycle. However, the risk was always accuracy. How do you compress a complex thought into just 4 bits without making the AI “stupid”?

The Hardware Breakthrough: 3rd Gen Transformer Engine

The Rubin R100 solves this through Adaptive Compression. Unlike older chips that used a “one-size-fits-all” scaling for the whole model, the Rubin architecture uses Micro-Block Scaling.

- The Math: Data is broken into tiny blocks of 16 elements. Each block gets its own high-precision “micro-scale” (E4M3).

- The Result: The R100 achieves 35 Petaflops of NVFP4 training compute. That is a 3.5x leap over Blackwell’s FP8 performance.
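The block-scaling idea described above can be sketched in a few lines of plain Python. This is a simplified illustration, not NVIDIA's implementation: it assumes the 4-bit values use the E2M1 magnitude grid (0, 0.5, 1, 1.5, 2, 3, 4, 6), keeps each block's scale as an ordinary Python float instead of encoding it in E4M3, and skips any tensor-level scale. All function names are hypothetical.

```python
# Simplified micro-block scaling: each block of 16 values gets its own scale,
# then every value is snapped to the nearest 4-bit (E2M1) magnitude.
from typing import List, Sequence, Tuple

FP4_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)  # E2M1 magnitudes (assumed)
BLOCK = 16                                            # elements per block


def quantize_block(block: Sequence[float]) -> Tuple[float, List[float]]:
    """Return (scale, codes) where codes are signed grid values."""
    amax = max(abs(v) for v in block)
    # Map the largest magnitude in the block onto the top of the grid.
    scale = amax / FP4_GRID[-1] if amax > 0 else 1.0
    codes = []
    for v in block:
        mag = min(FP4_GRID, key=lambda g: abs(abs(v) / scale - g))
        codes.append(-mag if v < 0 else mag)
    return scale, codes


def dequantize_block(scale: float, codes: Sequence[float]) -> List[float]:
    """Recover approximate values from a block's scale and codes."""
    return [c * scale for c in codes]


def quantize_tensor(values: Sequence[float]) -> List[Tuple[float, List[float]]]:
    """Split a flat list into blocks of 16 and quantize each independently."""
    return [quantize_block(values[i:i + BLOCK]) for i in range(0, len(values), BLOCK)]


if __name__ == "__main__":
    # One block of tiny values next to one block of huge values.
    values = [0.01 * i for i in range(16)] + [100.0 * i for i in range(16)]
    for scale, codes in quantize_tensor(values):
        print(f"scale={scale:.4f} first values={dequantize_block(scale, codes)[:4]}")
```

Because each block carries its own scale, the block of small values is not flattened to zero by the large values sitting next to it, which is the point of scaling per block instead of per model.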

Training vs. Inference: The 4-Bit Reality

When comparing NVFP4 precision vs FP8 training, the main concern used to be “gradient collapse”—where the AI stops learning because the numbers are too small to track. NVIDIA’s 2026 training recipe fixes this with Stochastic Rounding. Instead of rounding a number up or down normally, the chip uses a probabilistic “flip” that preserves the mathematical direction of the learning process.
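Stochastic rounding is easy to demonstrate in software. The sketch below is a generic version of the technique, not a description of how the hardware does it: a value is rounded up with probability equal to its fractional distance to the next grid point, so the average of many roundings matches the original value. The `step` parameter and the function name are illustrative choices.

```python
# Generic stochastic rounding onto a grid with spacing `step`.
import math
import random


def stochastic_round(x: float, step: float, rng: random.Random) -> float:
    """Round x down or up to a multiple of step, with probability set by proximity."""
    lower = math.floor(x / step) * step
    frac = (x - lower) / step          # fraction of the way to the next grid point
    return lower + step if rng.random() < frac else lower


if __name__ == "__main__":
    rng = random.Random(0)             # fixed seed so the demo is repeatable
    update = 0.3                       # a small update on a grid with step 1.0
    samples = [stochastic_round(update, 1.0, rng) for _ in range(20000)]
    print("round-to-nearest:", round(update))              # the update vanishes
    print("stochastic mean :", sum(samples) / len(samples))
```

With ordinary rounding the small update disappears every time, while the stochastic version keeps it alive on average, which is the property that low-precision training depends on.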

| Metric | FP8 (Blackwell) | NVFP4 (Rubin R100) |
| --- | --- | --- |
| Bit Width | 8-bit | 4-bit |
| Training Speed | 1x (Baseline) | 3.5x Faster |
| Accuracy Loss | < 0.1% | Identical Convergence |
| Memory Footprint | ~1GB per billion params | ~0.6GB per billion params |
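The memory footprint of the weights follows from bit counting. This sketch assumes weights only (no optimizer state, activations, or tensor-level scales) and, for the 4-bit case, one 8-bit scale shared by every block of 16 values, as in the micro-block scheme described earlier. The function name is hypothetical.

```python
# Weight storage per billion parameters for different formats (1 GB = 1e9 bytes).

def gb_per_billion_params(bits_per_value: float,
                          scale_bits: float = 0.0,
                          block_size: int = 1) -> float:
    """GB needed per 1e9 parameters, including amortized per-block scale bits."""
    effective_bits = bits_per_value + scale_bits / block_size
    return effective_bits / 8.0   # 1e9 params * (bits / 8) bytes = this many GB


if __name__ == "__main__":
    print("BF16 :", gb_per_billion_params(16))
    print("FP8  :", gb_per_billion_params(8))
    print("NVFP4:", gb_per_billion_params(4, scale_bits=8, block_size=16))
```

By this count, 8-bit weights take about 1 GB per billion parameters and block-scaled 4-bit weights about 0.56 GB, so the per-block scales cost only half a bit per value.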

This leap in NVFP4 precision vs FP8 training is why we are seeing a massive shift in the NVIDIA share price forecast 2026. By allowing models to train about 3x faster on the same amount of electricity, NVIDIA has effectively tripled the AI output of every watt a data center burns.

For developers setting up a solo content creator studio, this technical shift is what makes high-end AI affordable. It’s the difference between a $100/month API bill and a $10/month bill.

Sovereign AI: Impact on India’s Data Centers

India is currently in a “Sovereign AI” race. With the India IT Rules 2026 pushing for local data storage, the arrival of Rubin is timely.

Hyperscalers like Yotta and Tata Communications are already in the H2 2026 delivery queue for Rubin R100 instances. For the Indian developer, this means lower latency for localized LLMs and better support for Indic languages like Tamil and Hindi in real-time.

Conclusion: The Verdict for 2026

The NVIDIA Rubin R100 vs Blackwell B200 debate is officially settled. While Blackwell is a reliable workhorse for today’s LLMs, the Rubin architecture is the only choice for the “Agentic Future.” With the TSMC 3nm process behind the R100 platform and the breakthrough HBM4 memory integration, NVIDIA has once again reset the clock on what is possible in artificial intelligence.

For small creators, this means the tools we use—like Sora 2 for video or NotebookLM customization hacks—will become exponentially more powerful and cheaper to run.

Should You Wait for Rubin?

If you are a startup building simple chatbots, the ASUS ROG 2026 lineup or a Blackwell-based cloud instance is more than enough.

However, if you are building Agentic AI (autonomous systems that need to “think” and “act” simultaneously), the NVIDIA Rubin R100 is the only way forward. The combination of HBM4 memory and the 88-core Vera CPU makes Blackwell look like a prototype.